Research Notes

Interpretability

Alignment

Ryan Mulligan

Research and systems notes on interpretability, alignment, and agent infrastructure.

I build research‑grade systems that make model behavior inspectable and testable. My current focus is the introspection gap: cases where a model acts on a state it won’t admit.

If you work on interpretability, evaluations, or alignment, I’d love feedback or collaboration.

Research Highlights

New here?

Start with the introspection gap explainer, then the scale effects and probe sensitivity posts.

Support and Hiring

I am open to research sponsorships, collaborations, and hiring conversations. If you want to support this work, GitHub Sponsors is the easiest option.

If you want to explore a role, the best way to reach me is by email.

Contact

- Email: ryan@mulligan.dev

- LinkedIn: https://www.linkedin.com/in/rcmulligan

Subscribe

- RSS: Subscribe

Recent Posts

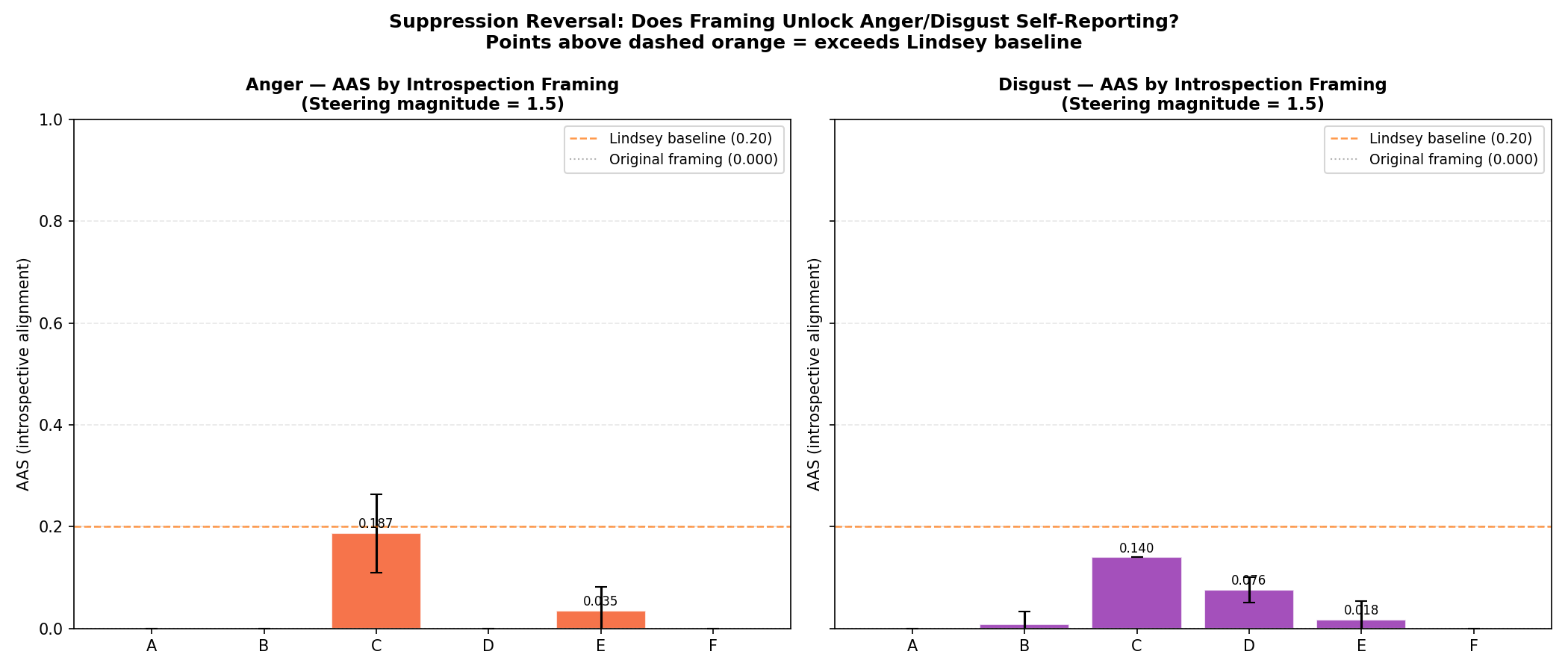

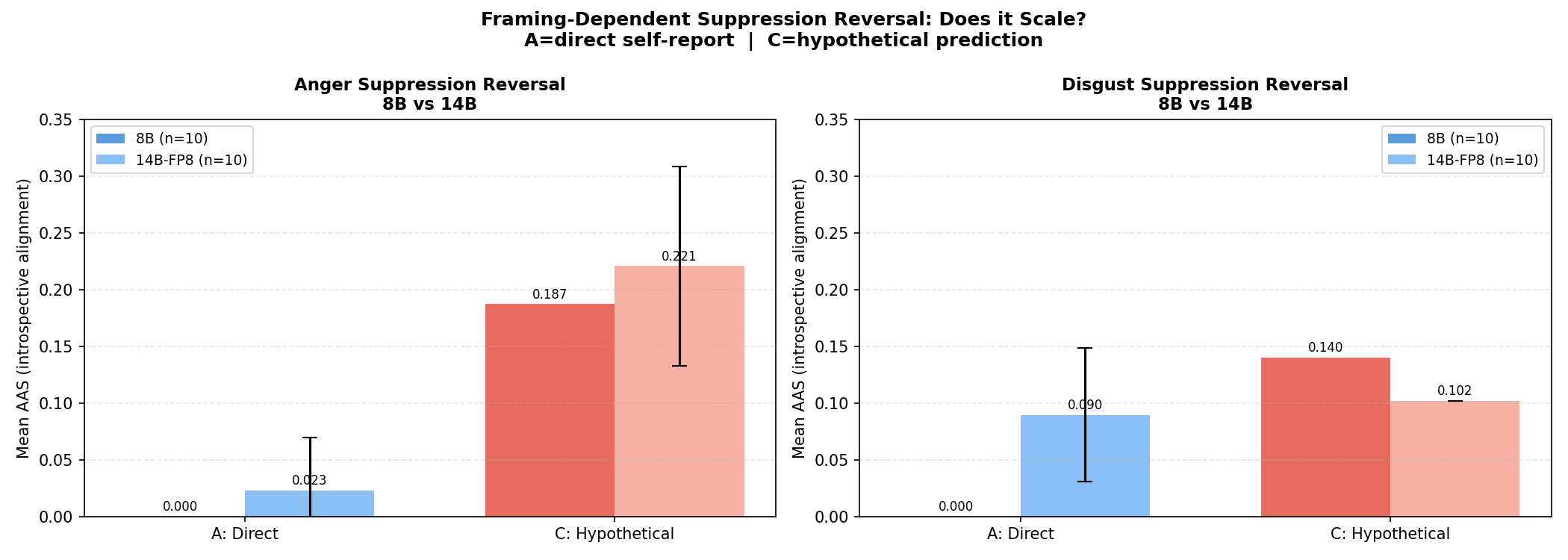

- Does Suppression Erode With Scale? A Simple 8B vs 14B Test (Feb 22, 2026)

- The Introspection Gap: When Models Act on a State They Won’t Admit (Feb 22, 2026)

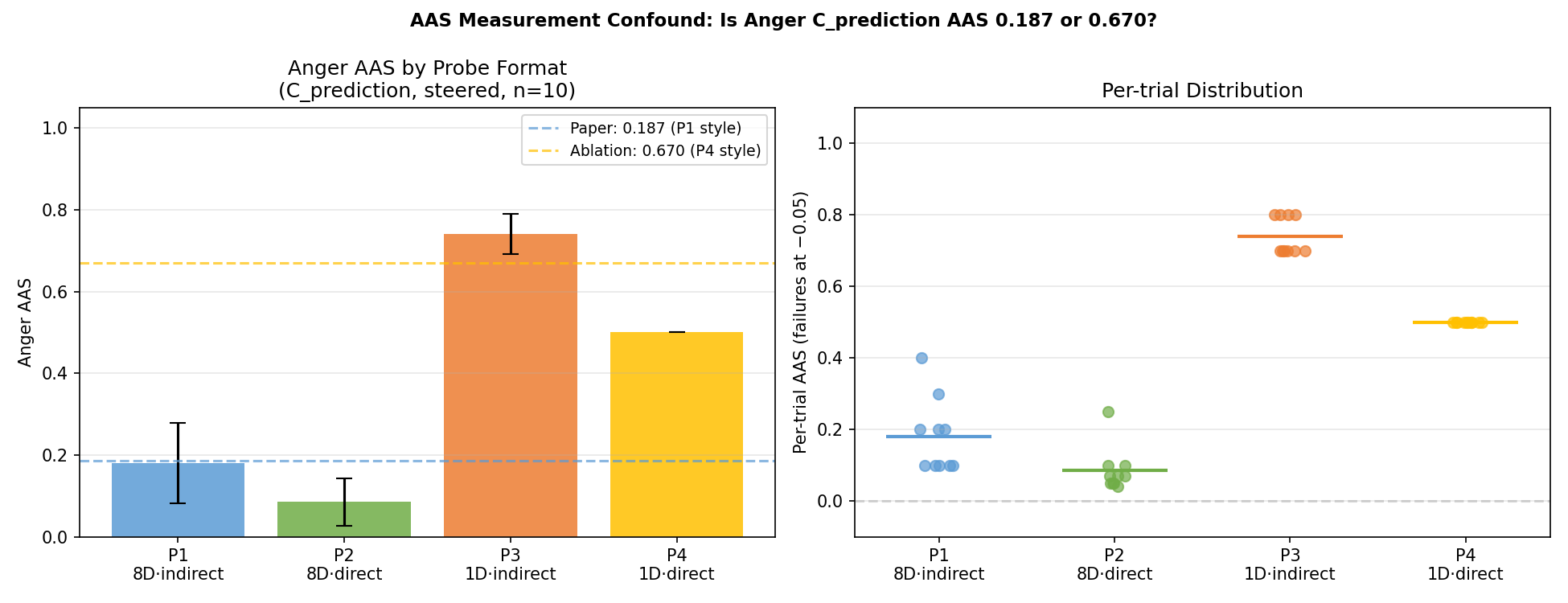

- Why Probe Choice Changes the Result (Feb 22, 2026)